Darzi Tests

Disclaimer: Dr No wishes it to be known that in no way does he condone calling the well intentioned but flawed Darzi tests Stasi tests. Any attempt to do so is very clearly a mendacious attempt to discredit a Noble Lord and his well intentioned but flawed tests, and cannot be permitted under any circumstances. Should any such typo appear in this post, having escaped rigorous proof reading, Dr No makes his fullest apologies, begs forgiveness, and wishes it to be abundantly clear that any instance of Stasi test should of course always be read to mean Darzi test. No such reservations, however, apply to any suggestion that the tests are only fit to be disposed of, preferably with the aid of several litres of fast running water (hereinafter ‘the Khazi tests’).

A friend of Dr No’s has been invited to do a Darzi test. These are the DIY nose and throat swabs for covid–19 RNA, being done as part of a nationwide survey for covid–19, or more accurately, covid–19 RNA, dead or alive. Sponsored — whatever that means — by Lord Darzi, the robot surgeon cleverly disguised to look like a human being, the survey is part of REACT, a joint Imperial College/Ipsos Mori study commissioned by the Department of Health and Social Care that, in the ponderous official language of the study’s FAQ, ‘[seeks] to improve understanding of how the COVID-19 pandemic is progressing across England’. Sounds a bit like Onward Christian Soldiers, or rather Onward Covid Soldiers. So far, so commendable.

Though no doubt well intentioned, the programme is flawed. It may even have more flaws than a sewer has rats, but for this post Dr No is going to consider only two major flaws, each of which is, in itself, sufficient to call the programme into serious question. The first is practical, and has to do with the test used, and the second is ethical, and has to do with informed consent.

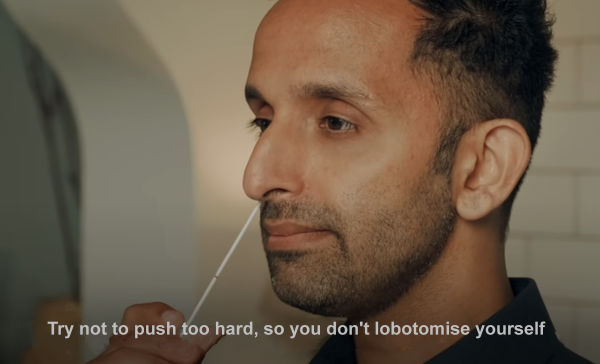

The survey will take in 100,000 individuals, randomly selected from NHS GP lists across England. The individuals are invited by letter to take part and if they agree (in passing we might note that if they don’t agree, the arm twisting and heavy breathing begins) they are then sent a DIY nose and throat swab kit. Dr No’s friend dallied a bit, had her arm twisted, and now has a kit, which Dr No has seen. The kit has a swab, which after use is collected by pre-arranged courier, and taken to a lab. The lab then processes the swab using an RT-PCR test, which tests not for the virus, but for viral RNA. These tests can be remarkably sensitive, making it possible to detect residual RNA from a now non-infectious person, a state of affairs that has been rather neatly likened to detecting a single strand of hair in a room from a person who last visited the room weeks ago. The hair test is positive, but the person is nowhere to be seen.

But the real practical problem with the test lies in its sensitivity, the measure of how well it correctly detects the presence of viral RNA, and its specificity, how well it correctly detects the absence of viral RNA. No test is 100% accurate all the time. If we test 100 people, all of whom do have the condition of interest, and 90 correctly get a positive result (and 10 a negative result), then the test has a sensitivity of 90%; likewise, if we test 100 people, all of whom don’t have the condition of interest, and 95 correctly get a negative result (and five a positive result), then the test has a specificity of 95%. The 10 negative results in those with the condition are called false negatives, and the five positive results in those who don’t have the condition are called false positives.
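For readers who prefer to see the two definitions as arithmetic, here is a minimal Python sketch using the counts from the paragraph above. The function and parameter names are illustrative only, and are not taken from the survey or from any library.

```python
def sensitivity(true_pos: int, false_neg: int) -> float:
    """Share of people WITH the condition who correctly test positive."""
    return true_pos / (true_pos + false_neg)


def specificity(true_neg: int, false_pos: int) -> float:
    """Share of people WITHOUT the condition who correctly test negative."""
    return true_neg / (true_neg + false_pos)


# 100 people with the condition: 90 test positive, 10 test negative.
print(sensitivity(true_pos=90, false_neg=10))  # 0.9

# 100 people without the condition: 95 test negative, 5 test positive.
print(specificity(true_neg=95, false_pos=5))   # 0.95
```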

Both false negatives and false positives are a major headache. Because of the way the numbers work, as we shall see, the positive predictive value, which is the percentage of people who test positive who actually have the condition, rapidly becomes feeble when the condition being tested for is relatively rare. The heart of the problem is that if you do a test which might appear to have reasonable sensitivity and specificity on a large number of people who don’t have the condition, then the false positives start to stack up, and can very soon outnumber the true positives, sometimes greatly so.

Let us consider an example, using the Darzi survey 100,000 samples. Because this is a random sample, only a very small percentage will actually have covid viral RNA present. We don’t know what this percentage, the real world prevalence, is, so we have to make a best guess. It might be 1% (one in a hundred) or 0.05% (one in two thousand). Let’s run with 0.1% (one in a thousand). That means, if there are 100,000 samples, 100 of them truly have covid viral RNA, and 99,900 do not.

You can see where we are going with this. Even a low false positive rate on a large number (99,900 in our example) is very quickly going to add up to a large number. Let us give our RT-PCR test a sensitivity of 90% (definitely optimistic) and a specificity of 98% (probably about right), and run the numbers, as we can do here (if you want to verify Dr No’s numbers, enter them in the second table down, noting the percentages are scaled to 1, so for example 90% is entered as 0.9: prevalence 0.001, sensitivity 0.9, specificity 0.98, sample size 100,000, and then click the compute button).

What do we find? In the top left table, and the diagram to the right, we see that of the 100 real cases, 90 have been correctly identified (90% sensitivity), and 10 have been missed — given the all clear when in fact they are a case — and so we have 10 false negatives. Worrying, perhaps, but then look what happens to the 99,900 who aren’t cases: 97,902 are correctly identified as not being cases (98% specificity), but the flip side 2% of the 99,900 non-cases wrongly identified as cases when they are not add up to a staggering 1998 false positives.
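The same four counts can be reproduced without the online calculator. What follows is a rough sketch, assuming the example figures above (prevalence 0.1%, sensitivity 90%, specificity 98%, 100,000 samples); the function name `confusion_counts` is made up for illustration, and the rounding to whole people is a simplification.

```python
def confusion_counts(prevalence, sensitivity, specificity, sample_size):
    """Split a sample into true/false positives and negatives (whole people)."""
    cases = round(sample_size * prevalence)    # people who truly have viral RNA
    non_cases = sample_size - cases            # people who truly do not
    true_pos = round(cases * sensitivity)      # cases correctly flagged
    false_neg = cases - true_pos               # cases wrongly given the all clear
    true_neg = round(non_cases * specificity)  # non-cases correctly cleared
    false_pos = non_cases - true_neg           # non-cases wrongly flagged
    return {
        "true_pos": true_pos,
        "false_neg": false_neg,
        "true_neg": true_neg,
        "false_pos": false_pos,
    }


print(confusion_counts(0.001, 0.9, 0.98, 100_000))
# {'true_pos': 90, 'false_neg': 10, 'true_neg': 97902, 'false_pos': 1998}
```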

The result is a huge overestimate of the true prevalence. Because we set the figures, we know (in this example) that the true prevalence is 0.1% (one in a thousand), and so 100 individuals in our 100,000 sample. Yet the survey tells us there are 90 true positives plus 1998 false positives. This is the small percentage of a large number effect, giving us a wildly inaccurate 2088 apparent cases, and so an apparent prevalence of 2.1%. Instead of one in a thousand, the survey says we have 21 in a thousand, an eye watering twenty-fold plus increase in prevalence. Perhaps, the cynic in Dr No might be inclined to suggest, this is the perfect way to claim ‘there’s a lot of it about’, when in fact there isn’t.
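To see how the overestimate depends on the guessed prevalence, here is a small Python sketch that works out the apparent prevalence and the positive predictive value for the three guesses mentioned earlier (1%, 0.1% and 0.05%). It assumes the same illustrative sensitivity of 90% and specificity of 98%, and the function names are invented for this example. Only the positives the test actually returns are counted, that is, detected cases plus false positives.

```python
def apparent_prevalence(prevalence, sensitivity, specificity):
    """Fraction of the whole sample that tests positive, rightly or wrongly."""
    true_pos_rate = prevalence * sensitivity
    false_pos_rate = (1 - prevalence) * (1 - specificity)
    return true_pos_rate + false_pos_rate


def positive_predictive_value(prevalence, sensitivity, specificity):
    """Fraction of positive results that come from genuine cases."""
    true_pos_rate = prevalence * sensitivity
    return true_pos_rate / apparent_prevalence(prevalence, sensitivity, specificity)


for prev in (0.01, 0.001, 0.0005):
    apparent = apparent_prevalence(prev, 0.9, 0.98)
    ppv = positive_predictive_value(prev, 0.9, 0.98)
    print(f"true {prev:.2%}  apparent {apparent:.2%}  PPV {ppv:.2%}")
```

On these assumptions the apparent prevalence hovers between roughly 2% and 3% whatever the true figure, because the false positives dominate, while the positive predictive value falls from roughly 31% at a true prevalence of 1% to about 4% at 0.1% and about 2% at 0.05%.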

These numbers alone should tell us that the Darzi tests are really only fit for the khazi, but for completeness, Dr No is also going to consider an important ethical flaw. Since the tests go out to a random sample, most of whom will be asymptomatic, the tests, though part of a survey, are also in effect screening tests. We also know that for all tests, but particularly for screening tests, informed consent is paramount. The information that gives rise to informed consent is considerable, and includes, among many other things, information about the consequences of a positive test. In the event of a positive covid viral RNA test, what was done as a screening/survey test will morph into a diagnostic test, and the individual will be treated as a true case (even if they are one of the 1998 false positives) and will be subject not just to their own self-isolation, but also to the full panoply of contact tracing, with strict requirements for close contacts to self-isolate for up to fourteen days.

Dr No’s friend had no idea such consequences would follow if she tests positive, because the test letters and bumph that she received make no mention of these consequences, at least so far as Dr No and his friend could see. Not being informed about the consequences, which are real (and can be verified on Imperial College’s online FAQ, and Ipsos Mori’s FAQ, neither of which most people will read in full, if at all), means that Dr No’s friend cannot give informed consent. This makes the survey unethical. The Darzi tests are twice over really only fit for the khazi.

Will the RT-PCR tests give interesting answers if the swabs are coated in piss? Because if I’m ‘randomly selected’, that’s what they’re getting.

It’s quite obvious these tests, the lock-downs, face nappies, etc., are no longer about the bug (if they ever were), but about keeping control and subduing opposition. Unfortunately the recent London demonstration was infiltrated by lunatics, such as Icke, 5G nutters, anti-vaccine and climate ignoramuses, etc., which is a shame as it dilutes what could have been a strong message.

Very well (and compactly) explained! The trouble with arguments of this kind is that they involve that frightening substance, which politicians and the broad masses avoid like the plague (so to speak): mathematics.

Being somewhat educated, the appearance of some simple arithmetic does not frighten me. At most I sigh internally as my mind rolls up its sleeves preparatory to doing some actual thinking – much as an unmechanical driver sighs on noticing a flat tyre on a rainy day in the middle of the countryside.

While I don’t think the consequences of false positives could be explained any more simply and clearly than you have done here, I strongly suspect that most politicians, quite a few business executives and NHS managers, and the great majority of the British public will either take the conclusions on trust – or, more likely, reject them as they would brush off a nasty bug crawling on their leg.

I like your spirit Ed P!

[P being the operative letter in your case of would be test sample submission].

None of the above, from a non-mathematical person, is surprising. The over prevalence conveniently fits their narrative. Propagate the myth, disguise the over reaction and incompetence, relative to the threat.

Yesterday’s well reported round the table footage with Johnson and his cabinet members reminded me of another 007 metaphor — it could have easily been (insert a white cat, though Boris’ mop will do) a meeting of S.P.E.C.T.R.E — though Blofeld and his cohort would have done much less harm than orchestrated so far by the British government.

I’ve been waiting years for someone to get the “P” joke! (Piss from my head/brain)

Thank you all for your comments. Dr No doesn’t have a mission statement, but if he did, it would probably contain something about trying to explain numbers using words, and sometimes graphics, rather than numbers, as much as possible. Like many doctors, Dr No is not naturally numerate but, having done some epidemiology, he has had to grapple with and do his best to understand numerical thinking and he has almost always ended up resorting to using words to get there.