A Sage Moment

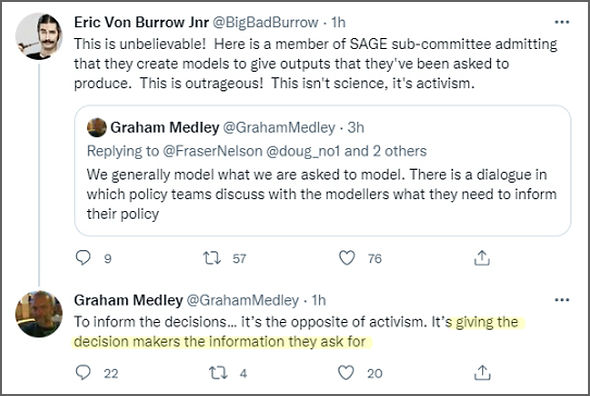

It’s not often that Dr No’s flabber gets well and truly ghasted. An extraordinary exchange on twitter (scroll down a page or so to get to the start of the substance, and click here to see the above tweet) has revealed what many have long suspected: SAGE purposely cook the books in its modelling reports. Graham Medley, professor of infectious disease modelling at LSHTM, and chief pongo for the time being of SAGE’s modelling group SPI-M, defends the group’s practice of ‘giving the decision makers the information they ask for’. Read that again, and let it sink in. The scientists give the politicians the information they ask for. Being on twitter, the discussion quickly becomes scrambled into incoherent fragments, making it almost, but not entirely, impossible to get to the heart of the matter. The crux, however, is simple enough: is SAGE told, one way or another, what to tell the government — which, in effect, soon becomes here’s the policy, now where’s the evidence — or does it provide, as its name, the Scientific Advisory Group, might suggest, independent and impartial scientific advice?

In a properly functioning sensible government, it has to be the latter. Advisers advise and ministers decide, to be sure, but that is predicated on an assumption that the advice is impartial, that is, not partial to any particular view point. The alternative, scientists led by politics, soon puts a country on the road to hell, as the Germans discovered to their cost in the 1930s. It does this because the politicians are in effect asking leading questions of the scientists, and so the answers become skewed away from a sensible appraisal of the risks, and so skewed away from a sensible process of decision making. If ministers ask for, and scientists provide, only models based on bad, very bad and apocryphal assumptions, then, de facto, the models based on less dire assumptions disappear from the table, and with them, the option to make less draconian decisions. By selecting only the bad and worst case scenarios, the books are cooked, in favour of the dire assumption, and the draconian decision.

The discussion, and civilised discussion it is, rather than acrimonious argument, hinges on two rather subtle, even semantic, points. The first is the meaning of a model: is it a prediction, or a scenario, and so the follow-up, more important, question, is it possible to get the two mixed up: that an outcome written as a scenario gets read as a prediction? Dr No has always been clear that modelling is the numerology that makes astrology seem rigorous. You dial in your what-if assumptions, and get your if-this scenarios. There is no assessment of probability at any stage, and so there is no way numerology can make predictions. But such niceties inevitably fail to penetrate the fevered brows of the decision makers. Imagine a gastrointestinal pandemic. If the modellers — at the request of the decision makers — produce only a doom laden dossier of diarrhoea, dehydration and death, then the decision makers are going to find it very difficult — because this is how the human mind works — not to read the dossier as a dossier of predictions, and so act accordingly. It is in a way a version of the map precedes the territory, or, as Dr No sometimes says, if all you have is bloody nails, then every tool becomes a hammer.

There is a very simple solution to this problem: the modellers model all possible scenarios, or, at a minimum, a representative range. Instead of modelling just nails, also model nuts, bolts, screws and glues. Instead of mapping only a part of the territory, map the whole territory. That ensures the decision makers can see there are alternative outcomes, or scenarios, and so alternative decisions that can be made, including, in the case of a temporal transient epidemic, the decision not to do anything. What if the omygodicron scariant causes substantially, perhaps even by a factor of ten, milder disease? That is the clear way to avoid cooking the books in favour of drastic and draconian decisions, and it leads directly to the second, and in many ways, more baffling point: why does Medley explicitly argue in favour of producing tool boxes that only contain nails, making every tool in the box look like a hammer, and maps that only cover part of the territory, ensuring that other routes, and other destinations, disappear from view?

Perhaps Medley has some form of expressive dysphasia. But if we take his words in the twitter discussion at face value, what we have is a reasoning that suggests two things. The first is that SAGE themselves have decided models based on milder assumptions don’t matter, because they don’t lead to (active) decisions. If they don’t change the outcome, there is no need to include them, because the decision makers aren’t interested: ‘Decision-makers are generally on (sic) only interested in situations where decisions have to be made,’ he tweets, and then adds, ‘That scenario [one based on mild assumptions] doesn’t inform anything. Decision-makers don’t have to decide if nothing happens.’ The argument is somewhat convoluted, but appears to be: if a scenario won’t produce ‘a result’, ie a decision to do something, then there is no point in including that scenario. This, frankly, is bonkers. As every doctor knows, there is always an option to decide to do nothing. We generally call it W&S for short, or ‘wait and see’. To exclude evidence that might lead to a W&S decision is indeed worse than bonkers, it is incompetent.

If excluding the boring scenarios — boring because ‘nothing happens’ — takes the biscuit, then what comes next shatters that independence biscuit into a thousand crumbs. A tweet or two later Medley adds (emphasis added), ‘We generally model what we are asked to model. There is a dialogue in which policy teams discuss with the modellers what they need to inform their policy.’ However this is read, it only adds up to one thing: instead of policy makers led by science, we have modellers led by policy makers: “We generally model what we are asked to model.” And then, a short while later, we have the tweet Dr No quoted from in the opening paragraph: “To inform the decisions… it’s the opposite of activism. It’s giving the decision makers the information they ask for”. Scientists led by decision makers. Yours it is to ask, ours

There has inevitably been, given this is twitter, some rather furious back-pedalling, a medley of Medley apologists, an outpouring of profanity, and almost certainly some tweets have been retired to the great nesting box in the sky – but the screen grab is ever our friend. The apologists operate on two empty grounds: we should only ever model worst case scenarios, because that ensures we cover any eventual outcome — a framework for decision making taken straight from the devil’s playbook — and various rehashes of Medley’s own argument, no need to model scenarios that don’t lead to action — which all fall foul of the doing nothing is not a decision fallacy. There is also a marginal argument that the modellers need some input from decision makers, so the modellers know what to model — no point in modelling the nuclear option if the government intends never to use that option — but this input must be kept to a minimum, lest it constrain the range, or breadth, of scenarios modelled.

None of these developments alter the essential message contained in this extraordinary series of tweets that among other things explain why covid models always exaggerate outcomes — that’s what happens if you focus on worst case scenarios — and why government always over-reacts — again, that’s what happens if you focus on worst case scenarios: the pandemic has been managed not by politicians led by science, but by scientists led by the politics. A sage moment indeed.

Footnote: a key player, if not the key player, in the opening up of this debate is Fraser Nelson, editor of the Spectator. Here is his take on the implications of what Medley has said. Dr No has also added (at 13:00h) a link in the first paragraph to the tweet contained in the image.

SAGE collusion, coupled with Falsi’s ‘regulatory capture’, tame PIs and Gates’s money, it’s somewhat unsurprising we’re in this mess.

How did Democracy decay to Oligarchy to Fascism right under our noses?

Essentially Medley has confessed to being a peddler of porky pies.

SAGE lies, the nation dies.

One can juggle Medley’s phraseology to one’s heart’s content but that’s the gist of it. I commend the treatment of Admiral Byng to your attention (while admitting that Byng was less guilty than this shower).

I could barely believe this encounter when I first read it yesterday. Kudos to Fraser Nelson for teasing it out. Also says a lot about journalism today that you only get real answers when people naively believe they’re off record. And a reminder that when news men do their job, and do it well, their job is hugely important.

The revealing Medley/Nelson exchange fills me with hope that the sheer intellect and curiosity of man will eventually tear this whole charade down.

I remember, back to March 2020, watching Fergus Walsh on the BBC news describing the Imperial College graph of R value vs NPIs, exclaiming “Look at the effect of LOCKDOWN!!!” I wondered at the time if the audience would realise that the “effect” is based entirely on the assumptions input to the model.

As Shawn says above – kudos to Fraser Nelson. And kudos to you Dr No for all your analysis and continuing determination to cut the wheat from the chaff. Please keep going!

I think that the truth will come out in due course – maybe in a year or two. Unfortunately, I don’t believe that any consequences will follow. Will the British public consider their own deprivation of freedom for a few years more serious than the deliberate murder of more than a million Iraqis and the destruction of their society?

As far as I can see quite a lot of the public consider lockdowns as rather a lark – a sort of extended holiday – and are quite willing to believe that the “vaccines” and masks are not only beneficial but necessary.

I have always been rather a skeptical, if not cynical, fellow; but the events of the past two years have given me a deep and abiding contempt for most of my fellow humans. My highest values are truth and love, both of which have been abandoned and trampled underfoot.

Dr No should have given Fraser Nelson much greater credit and prominence than just a mention in a footnote. He also suspects that Nelson knew exactly what he was doing, and did it with great skill, quickly drawing out the essentials in public from a man who has always struck Dr No as not the most worldly of men.

All in all, that short exchange on twitter of all places should be a watershed moment. Put aside all the other stuff swirling around, what that exchange reveals beyond any reasonable doubt is that ‘the science’ is compromised. That means nothing less than the whole basis for the government’s response to covid is compromised. As dearieme puts it so well, SAGE lies, the nation dies. Yet the extraordinary – or perhaps it isn’t extraordinary at all, given very strange times – thing is the vast majority of the population and all the MSM, bar the Spectator, have ignored the exchange and its implications. Very bizarre. But, if you live in a mad world, then perhaps even madness seems normal…

This has been going on for years – perhaps forever. Think of the report that Tony Blair demanded from the intelligence services, as “evidence” for his decision to attack Iraq and help to kill over a million civilians.

Time after time he repeated the demand, and time after time he received a bland report making it clear that Iraq posed not the slightest threat to the UK. Then John Scarlett (now Sir John Scarlett, ahem) entered the picture and almost the following day Blair had the “scenario” he wanted. Boom!

‘Scarlett took on the role of head of the JIC one week before the September 11 attacks.[8]

‘The normally secretive intelligence services were thrust into the public gaze in the Summer of 2003 after the death of the eminent government weapons expert, Dr David Kelly. Kelly had been found dead in the Oxfordshire countryside near his home, after being exposed as the source of allegations that the government had “sexed-up” intelligence regarding existence of weapons of mass destruction in Iraq prior to the 2003 invasion of Iraq. The “classic case” was the claim that Iraq could launch Weapons of Mass Destruction “within 45 minutes of an order to do so”—Dr Kelly had privately dismissed this as “risible”.[9]’

https://en.wikipedia.org/wiki/John_Scarlett

The laughably incompetent and implausible Skripal affair and its Dawn Sturgess pendant showed that the apparatchiks had gained immensely in confidence and contempt for the public’s intelligence. With a story line that would have been turned down as too ridiculous and inconsistent for “Dr Who” or a James Bond movie, it was lapped up by the media and most of the public, and apparently proved that the UK should prepare for war against Russia.

I must say that I completely agree with Reg, who left the last comment in the Twitstream quoted by Fraser Nelson. While I normally disapprove of bad language, especially in public, this is a situation in which violent swear words seem unavoidable.

It’s not just the admission that political decisions of immense import are being justified by “scenarios” that are no more likely than any SF plot. It’s the utter insouciance (to borrow Paul Craig Roberts’ extremely appropriate word) with which Professor Medley not only shrugs off responsibility but actually asserts that he can’t see the problem! I am strongly reminded of MAD Magazine’s Alfred E. Neuman with his idiotically complacent grin and trademark query, “What, me worry?”

What is missing in all this is accountability. I have long wished to see someone highly visible keep track of all claims and predictions – especially those on which governments act to our detriment – and review them regularly to assess how accurate they were. Professor Ferguson, for example, should have been publicly pilloried every time he published an outlandishly wrong forecast – and after the third time, he should have been ridiculed and barred from any role in public affairs. Journal editors should laugh when they receive a paper from him, and throw it in the bin sight unseen.

Good news on the poo front:

http://claytonecramer.blogspot.com/2021/12/things-you-find-in-sewage.html

From the moment HMG abandoned sane pandemic plans for lockdown, the role of modellers with their scare prophecies has been about engineering mass product take-up Edward Bernays-style.

I keep thinking that whereas the essence of science is absolute (if necessary brutal) honesty, the essence of politics and business is deception.

Thus when politicians take over science, the latter simply ceases to be of any value.

Although I cannot find it at the moment, I feel sure that Paul Dirac once said something that deserved to be accompanied by a flourish of trumpets. Asked by someone whether he was not concerned about the moral or religious implications of something he had said, he replied, “I am a scientist, not a nursemaid”.

That sums it up perfectly. A scientist must find the facts, without fear or favour, quite regardless of whether others like them or not.

However, once a politician has announced that black is white, so it must be eternally. It is carved in granite.

@Tom: you may blame the pols. I suspect they are being manipulated by the bureaucrats of science and medicine. And, of course, by people who know nothing of either science or medicine such as the Astrologer Royal.

My guiding rule is, “Follow the money”. (Although those in search of power are just as much to be dreaded).

I read in “The Daily Sceptic” today that for the first time, senior government ministers are actually examining the data themselves, rather than simply accepting whatever SAGE tells them.

The members of SAGE and other (very unwise) groups are to blame for providing rotten advice. But the politicians are the ones who chose to accept it sight unseen.

Agree, follow the money, and those greedy for power, are always good starting points. Ministers on the other hand are in an unenviable position, caught between the devil and the deep blue sea. Most have no understanding of science, so when they look at one of SAGE’s doom scenarios, they are in effect reading the tea leaves. The High Priest of Tea Leaffery tells them the tea leaves are the oracle: what is the poor minister to do?

Apply common sense, of course. That is what we pay them to do. Here, part of common sense includes considering that the High Priest of Tea Leaffery is but a witch doctor, casting dangerous spells. Awareness of the High Priest’s track record doesn’t do any harm either: a history of casting bad spells is not a good sign. And never forget that a decision to do nothing is still a decision.

Tom – all scientists, even the best of them, are human, all too human, and so are subject to biases, some known, some unknown. That’s why the default position in science has to be scepticism, and it’s why we need replication. Even with all the checks and balances in place, a lot of dud science gets published. Given the process of science is flawed, insofar as it produces dud results, it could be said that those that follow the scientific method are in fact acolytes of their own religion, a religion called science. It is as well for scientists never to forget that possibility: that they too worship, but at a different altar. They believe in the objective impartiality of science, but can they know that they are not looking at the world through a vast invisible prism that distorts reality?

That said, science does have the redeeming (curious how another religious word pops up) feature: it spends a fair amount of time trying to prove itself wrong, a practice rather conspicuous by its absence from normal religious practice.

The astounding thing about the Medley tweets is the explicit, rationalised exclusion of milder outcome scenarios. Dr No finds this so obviously a bad idea that he struggles to even grasp why many have jumped to Medley’s defence – remarks along the lines that ‘obviously you don’t include milder scenarios because in those scenarios nothing happens’. They ignore the fact that deciding to do nothing is still a decision.

Dr No suspects these pro-Medley types are the same people who believe it’s just fine to start the Y axis on charts at any convenient value ‘so you can zoom in on the area of interest’, not realising that their zooming in can seriously distort the visual message of the chart (eg on a zoomed chart Y appears to double, on an unzoomed chart we can see it only went up around 10%).
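The arithmetic behind that zoomed-chart example can be sketched in a few lines. The numbers here are invented purely for illustration (a value rising from 100 to 110, and a Y axis that starts at 90), and the helper name `apparent_ratio` is hypothetical, not taken from any charting library. It simply compares the heights of the two bars as they would be drawn.

```python
def apparent_ratio(before, after, axis_start=0.0):
    """Ratio of the drawn bar heights when the Y axis begins at axis_start.

    A bar is drawn from the axis baseline up to its value, so its visible
    height is (value - axis_start), not the value itself.
    """
    if axis_start >= min(before, after):
        raise ValueError("axis_start must lie below both values")
    return (after - axis_start) / (before - axis_start)


# Illustrative (made-up) figures: a rise from 100 to 110, ie 10%.
before, after = 100.0, 110.0

unzoomed = apparent_ratio(before, after)               # Y axis starts at 0
zoomed = apparent_ratio(before, after, axis_start=90)  # Y axis starts at 90

print(f"unzoomed: second bar is {unzoomed:.2f}x the first")  # 1.10x
print(f"zoomed:   second bar is {zoomed:.2f}x the first")    # 2.00x
```

Same data, same 10% rise, but with the axis started at 90 the second bar is drawn twice as tall as the first.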

The (un)SAGE practice of presenting only scenarios with worse outcomes is no different to a surgeon who only considers bad outcomes for his or her patients, and excludes milder outcomes, because ‘nothing happens’. To this surgeon, every patient with abdominal pain is a peritonitis scenario, and each must have their abdomen opened up. The possibility that the patient just has wind is excluded from consideration, because, given a wind scenario, nothing happens. Bonkers, or what?

I think SAGE and Imperial College might have been the driving force behind the panic in this country initially. Being charitable I’ll say that all promoters of the pandemic think it’s too late to stop and if the people find out what they’ve done they will at least suffer political suicide if not literal death. On the other hand there might be a hidden hand that is driving the whole circus for what could be nefarious reasons of which there are numerous.

Ed’s Note: apologies for delay in approving, Xmas/New Year etc